Wget is a file-download program widely used on Linux-family operating systems.

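You may wonder why such a tool is needed, but a large share of Linux systems don't use a desktop environment at all. They run in a pure CLI or text-based (TUI) environment, where convenient tools such as a web browser aren't available. In those cases, Wget is an excellent tool for downloading files from the internet.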

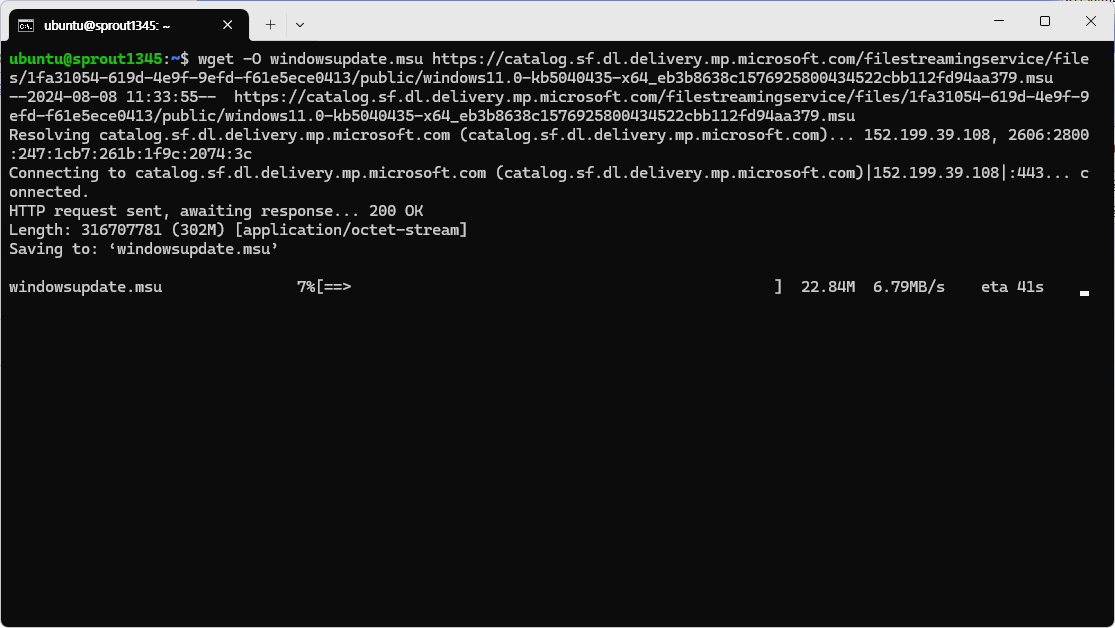

On Windows it may seem you'd never need it, but some tasks do call for Wget, for example downloading language models such as Llama. And because Wget is open source, ports that run on Windows are available.

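Still, it would be a waste to use Wget only for downloading language models and then forget about it. As mentioned above, Wget is a powerful file-download tool, so this post explains how to install Wget on Windows and covers some basic usage.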

Installing Wget with Winget

(Naturally) to use Wget on Windows, you first need to download it.

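You can download Wget from the page below, but a copy obtained this way is inconvenient to use. It is a portable program, so its location is not registered with the system (the PATH environment variable). You would have to either add Wget's folder to PATH manually or navigate to that folder every time you want to run it, which throws away Wget's main advantage: convenience.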

GNU Wget 1.21.4 for Windows (eternallybored.org)

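Fortunately, the developer of the Windows build of Wget has also published it on Winget. If you install Wget through Winget, Winget takes care of registering the program and keeping it updated, which makes Wget far more convenient to use.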

Enter the following command.

winget search wget

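At the time of writing, two packages appear in the list; the one whose ID is JernejSimoncic.Wget is the one we will install. You can confirm that the developer named on the download page mentioned above matches this ID (JernejSimoncic). Of course, you may use the other package if you prefer.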

Then enter the following command.

winget install --id JernejSimoncic.Wget

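The installation finishes quickly. If the wget command is not recognized in a terminal that was already open, open a new terminal window so it picks up the updated PATH.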
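The quickest check that Winget put wget on PATH (the problem with the portable download described earlier) is to run `wget --version` in a new terminal. If you want to do the same check from a script, the Python sketch below is one way to do it. The helper names `find_wget` and `wget_version` are hypothetical and not part of Wget or Winget; the sketch only relies on the standard library's `shutil.which` and `subprocess.run`.

```python
import shutil
import subprocess
from typing import Optional


def find_wget() -> Optional[str]:
    """Return the full path of the wget executable found on PATH, or None.

    shutil.which searches the PATH environment variable; on Windows it also
    tries the extensions listed in PATHEXT (such as .exe).
    """
    return shutil.which("wget")


def wget_version() -> Optional[str]:
    """Return the first line of `wget --version`, or None if it is unavailable."""
    path = find_wget()
    if path is None:
        return None
    # Run the executable directly (no shell) and capture its text output.
    result = subprocess.run([path, "--version"], capture_output=True, text=True)
    if result.returncode != 0:
        return None
    lines = result.stdout.splitlines()
    return lines[0] if lines else None


if __name__ == "__main__":
    version = wget_version()
    print(version or "wget was not found on PATH; try opening a new terminal.")
```

If `find_wget()` returns None right after installing, the terminal (or the Python process) was most likely started before PATH was updated.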

Basic usage

Downloading a file with Wget is simple.

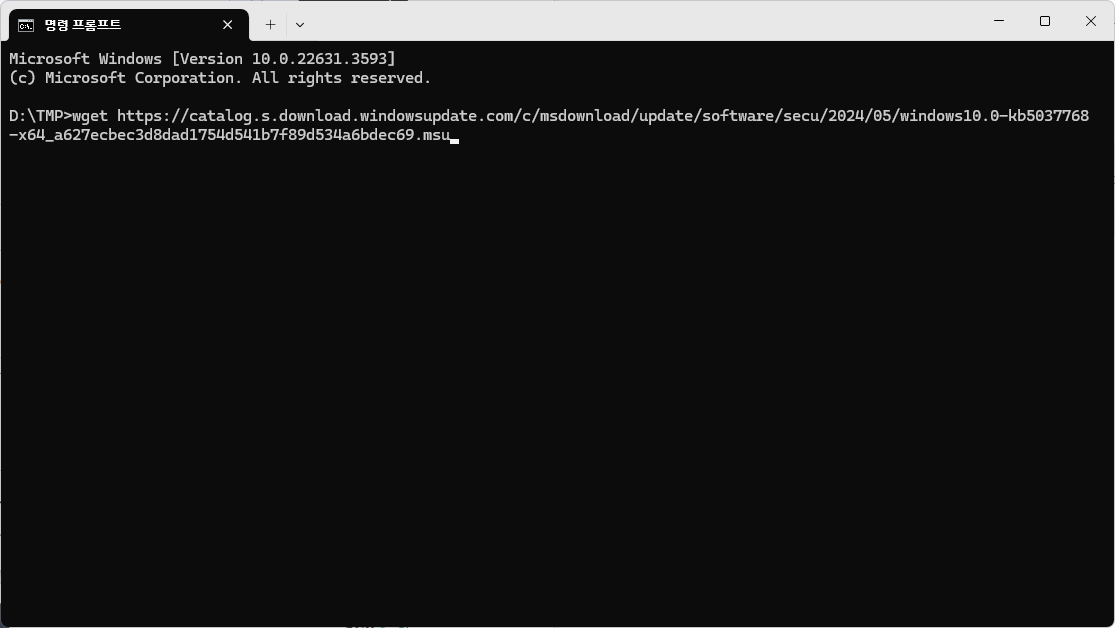

wget "URL"

For example:

wget https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2024/05/windows10.0-kb5037768-x64_a627ecbec3d8dad1754d541b7f89d534a6bdec69.msu

Entering a command like this is all it takes to download the file. Of course, the URL must be valid, and the file is saved under its default name.
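When no name is given, Wget derives the file name from the URL. The Python sketch below approximates that rule for simple URLs only: it takes the last segment of the URL path and falls back to `index.html` when the path is empty or ends in `/`. This is a simplified assumption for illustration: it ignores query strings, redirects and the Content-Disposition header, and it does not model the `.1`, `.2` suffixes Wget adds when the same file is downloaded again into the same directory. The helper name `default_filename` is hypothetical.

```python
import posixpath
from urllib.parse import urlsplit


def default_filename(url: str) -> str:
    """Approximate the local file name wget uses when no -O is given.

    Simplified sketch: only the last segment of the URL path is used.
    """
    path = urlsplit(url).path          # e.g. "/c/msdownload/.../file.msu"
    name = posixpath.basename(path)    # "" when the path is empty or ends in "/"
    return name or "index.html"


url = ("https://catalog.s.download.windowsupdate.com/c/msdownload/update/"
       "software/secu/2024/05/windows10.0-kb5037768-x64_"
       "a627ecbec3d8dad1754d541b7f89d534a6bdec69.msu")
print(default_filename(url))
```

For the example URL, the sketch yields the same name that appears in wget's "Saving to:" line.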

Running the command produces output like the following.

D:\TMP>wget https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2024/05/windows10.0-kb5037768-x64_a627ecbec3d8dad1754d541b7f89d534a6bdec69.msu

--2024-05-16 14:36:58-- https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2024/05/windows10.0-kb5037768-x64_a627ecbec3d8dad1754d541b7f89d534a6bdec69.msu

Resolving catalog.s.download.windowsupdate.com (catalog.s.download.windowsupdate.com)... 152.199.39.108

Connecting to catalog.s.download.windowsupdate.com (catalog.s.download.windowsupdate.com)|152.199.39.108|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 680898588 (649M) [application/octet-stream]

Saving to: 'windows10.0-kb5037768-x64_a627ecbec3d8dad1754d541b7f89d534a6bdec69.msu'

windows10 0%[ ] 4.37M 61.8KB/s eta 3h 18m

As the output shows, the file is saved under its original name, unchanged.

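To save it under a different name, use the -O (uppercase) option. Be careful not to use -o (lowercase) instead: they are different options. -O (--output-document) sets the file the download is written to, while -o (--output-file) writes Wget's log messages to a file.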

wget -O "file name to save as" "URL"

For example, to save the file to the root of drive D: under the name asdf.sdf, enter:

wget -O "D:\asdf.sdf" "URL"
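Because -O and -o are so easy to mix up, it can help to build the argument list explicitly when calling wget from a script. The Python sketch below is a hypothetical helper (`build_wget_args` is not part of Wget); it only assembles arguments, using -O (--output-document) for the saved file and -o (--output-file) for the log, as listed in the help text later in this post.

```python
from typing import List, Optional


def build_wget_args(url: str,
                    output_document: Optional[str] = None,
                    log_file: Optional[str] = None) -> List[str]:
    """Assemble a wget command line as a list of arguments.

    -O (--output-document) chooses the file the download is saved to;
    -o (--output-file) only chooses where wget writes its log messages.
    """
    args = ["wget"]
    if output_document is not None:
        args += ["-O", output_document]
    if log_file is not None:
        args += ["-o", log_file]
    args.append(url)
    return args


print(build_wget_args("https://example.com/file.bin", output_document=r"D:\asdf.sdf"))
```

The resulting list can be passed to `subprocess.run(..., check=True)`; passing a list rather than a single string avoids having to quote paths such as `D:\asdf.sdf` by hand.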

Odds and ends

Beyond these basics, Wget has many more powerful features.

Below is the full help output.

C:\Windows\System32>wget --help

GNU Wget 1.21.4, a non-interactive network retriever.

Usage: wget [OPTION]... [URL]...

Mandatory arguments to long options are mandatory for short options too.

Startup:

-V, --version display the version of Wget and exit

-h, --help print this help

-b, --background go to background after startup

-e, --execute=COMMAND execute a `.wgetrc'-style command

Logging and input file:

-o, --output-file=FILE log messages to FILE

-a, --append-output=FILE append messages to FILE

-d, --debug print lots of debugging information

-q, --quiet quiet (no output)

-v, --verbose be verbose (this is the default)

-nv, --no-verbose turn off verboseness, without being quiet

--report-speed=TYPE output bandwidth as TYPE. TYPE can be bits

-i, --input-file=FILE download URLs found in local or external FILE

--input-metalink=FILE download files covered in local Metalink FILE

-F, --force-html treat input file as HTML

-B, --base=URL resolves HTML input-file links (-i -F)

relative to URL

--config=FILE specify config file to use

--no-config do not read any config file

--rejected-log=FILE log reasons for URL rejection to FILE

Download:

-t, --tries=NUMBER set number of retries to NUMBER (0 unlimits)

--retry-connrefused retry even if connection is refused

--retry-on-host-error consider host errors as non-fatal, transient errors

--retry-on-http-error=ERRORS comma-separated list of HTTP errors to retry

-O, --output-document=FILE write documents to FILE

-nc, --no-clobber skip downloads that would download to

existing files (overwriting them)

--no-netrc don't try to obtain credentials from .netrc

-c, --continue resume getting a partially-downloaded file

--start-pos=OFFSET start downloading from zero-based position OFFSET

--progress=TYPE select progress gauge type

--show-progress display the progress bar in any verbosity mode

-N, --timestamping don't re-retrieve files unless newer than

local

--no-if-modified-since don't use conditional if-modified-since get

requests in timestamping mode

--no-use-server-timestamps don't set the local file's timestamp by

the one on the server

-S, --server-response print server response

--spider don't download anything

-T, --timeout=SECONDS set all timeout values to SECONDS

--dns-servers=ADDRESSES list of DNS servers to query (comma separated)

--bind-dns-address=ADDRESS bind DNS resolver to ADDRESS (hostname or IP) on local host

--dns-timeout=SECS set the DNS lookup timeout to SECS

--connect-timeout=SECS set the connect timeout to SECS

--read-timeout=SECS set the read timeout to SECS

-w, --wait=SECONDS wait SECONDS between retrievals

(applies if more then 1 URL is to be retrieved)

--waitretry=SECONDS wait 1..SECONDS between retries of a retrieval

(applies if more then 1 URL is to be retrieved)

--random-wait wait from 0.5*WAIT...1.5*WAIT secs between retrievals

(applies if more then 1 URL is to be retrieved)

--no-proxy explicitly turn off proxy

-Q, --quota=NUMBER set retrieval quota to NUMBER

--bind-address=ADDRESS bind to ADDRESS (hostname or IP) on local host

--limit-rate=RATE limit download rate to RATE

--no-dns-cache disable caching DNS lookups

--restrict-file-names=OS restrict chars in file names to ones OS allows

--ignore-case ignore case when matching files/directories

-4, --inet4-only connect only to IPv4 addresses

-6, --inet6-only connect only to IPv6 addresses

--prefer-family=FAMILY connect first to addresses of specified family,

one of IPv6, IPv4, or none

--user=USER set both ftp and http user to USER

--password=PASS set both ftp and http password to PASS

--ask-password prompt for passwords

--use-askpass=COMMAND specify credential handler for requesting

username and password. If no COMMAND is

specified the WGET_ASKPASS or the SSH_ASKPASS

environment variable is used.

--no-iri turn off IRI support

--local-encoding=ENC use ENC as the local encoding for IRIs

--remote-encoding=ENC use ENC as the default remote encoding

--unlink remove file before clobber

--keep-badhash keep files with checksum mismatch (append .badhash)

--metalink-index=NUMBER Metalink application/metalink4+xml metaurl ordinal NUMBER

--metalink-over-http use Metalink metadata from HTTP response headers

--preferred-location preferred location for Metalink resources

Directories:

-nd, --no-directories don't create directories

-x, --force-directories force creation of directories

-nH, --no-host-directories don't create host directories

--protocol-directories use protocol name in directories

-P, --directory-prefix=PREFIX save files to PREFIX/..

--cut-dirs=NUMBER ignore NUMBER remote directory components

HTTP options:

--http-user=USER set http user to USER

--http-password=PASS set http password to PASS

--no-cache disallow server-cached data

--default-page=NAME change the default page name (normally

this is 'index.html'.)

-E, --adjust-extension save HTML/CSS documents with proper extensions

--ignore-length ignore 'Content-Length' header field

--header=STRING insert STRING among the headers

--compression=TYPE choose compression, one of auto, gzip and none. (default: none)

--max-redirect maximum redirections allowed per page

--proxy-user=USER set USER as proxy username

--proxy-password=PASS set PASS as proxy password

--referer=URL include 'Referer: URL' header in HTTP request

--save-headers save the HTTP headers to file

-U, --user-agent=AGENT identify as AGENT instead of Wget/VERSION

--no-http-keep-alive disable HTTP keep-alive (persistent connections)

--no-cookies don't use cookies

--load-cookies=FILE load cookies from FILE before session

--save-cookies=FILE save cookies to FILE after session

--keep-session-cookies load and save session (non-permanent) cookies

--post-data=STRING use the POST method; send STRING as the data

--post-file=FILE use the POST method; send contents of FILE

--method=HTTPMethod use method "HTTPMethod" in the request

--body-data=STRING send STRING as data. --method MUST be set

--body-file=FILE send contents of FILE. --method MUST be set

--content-disposition honor the Content-Disposition header when

choosing local file names (EXPERIMENTAL)

--content-on-error output the received content on server errors

--auth-no-challenge send Basic HTTP authentication information

without first waiting for the server's

challenge

HTTPS (SSL/TLS) options:

--secure-protocol=PR choose secure protocol, one of auto, SSLv2,

SSLv3, TLSv1, TLSv1_1, TLSv1_2, TLSv1_3 and PFS

--https-only only follow secure HTTPS links

--no-check-certificate don't validate the server's certificate

--certificate=FILE client certificate file

--certificate-type=TYPE client certificate type, PEM or DER

--private-key=FILE private key file

--private-key-type=TYPE private key type, PEM or DER

--ca-certificate=FILE file with the bundle of CAs

--ca-directory=DIR directory where hash list of CAs is stored

--crl-file=FILE file with bundle of CRLs

--pinnedpubkey=FILE/HASHES Public key (PEM/DER) file, or any number

of base64 encoded sha256 hashes preceded by

'sha256//' and separated by ';', to verify

peer against

--random-file=FILE file with random data for seeding the SSL PRNG

--ciphers=STR Set the priority string (GnuTLS) or cipher list string (OpenSSL) directly.

Use with care. This option overrides --secure-protocol.

The format and syntax of this string depend on the specific SSL/TLS engine.

HSTS options:

--no-hsts disable HSTS

--hsts-file path of HSTS database (will override default)

FTP options:

--ftp-user=USER set ftp user to USER

--ftp-password=PASS set ftp password to PASS

--no-remove-listing don't remove '.listing' files

--no-glob turn off FTP file name globbing

--no-passive-ftp disable the "passive" transfer mode

--preserve-permissions preserve remote file permissions

--retr-symlinks when recursing, get linked-to files (not dir)

FTPS options:

--ftps-implicit use implicit FTPS (default port is 990)

--ftps-resume-ssl resume the SSL/TLS session started in the control connection when

opening a data connection

--ftps-clear-data-connection cipher the control channel only; all the data will be in plaintext

--ftps-fallback-to-ftp fall back to FTP if FTPS is not supported in the target server

WARC options:

--warc-file=FILENAME save request/response data to a .warc.gz file

--warc-header=STRING insert STRING into the warcinfo record

--warc-max-size=NUMBER set maximum size of WARC files to NUMBER

--warc-cdx write CDX index files

--warc-dedup=FILENAME do not store records listed in this CDX file

--no-warc-compression do not compress WARC files with GZIP

--no-warc-digests do not calculate SHA1 digests

--no-warc-keep-log do not store the log file in a WARC record

--warc-tempdir=DIRECTORY location for temporary files created by the

WARC writer

Recursive download:

-r, --recursive specify recursive download

-l, --level=NUMBER maximum recursion depth (inf or 0 for infinite)

--delete-after delete files locally after downloading them

-k, --convert-links make links in downloaded HTML or CSS point to

local files

--convert-file-only convert the file part of the URLs only (usually known as the basename)

--backups=N before writing file X, rotate up to N backup files

-K, --backup-converted before converting file X, back up as X.orig

-m, --mirror shortcut for -N -r -l inf --no-remove-listing

-p, --page-requisites get all images, etc. needed to display HTML page

--strict-comments turn on strict (SGML) handling of HTML comments

Recursive accept/reject:

-A, --accept=LIST comma-separated list of accepted extensions

-R, --reject=LIST comma-separated list of rejected extensions

--accept-regex=REGEX regex matching accepted URLs

--reject-regex=REGEX regex matching rejected URLs

--regex-type=TYPE regex type (posix|pcre)

-D, --domains=LIST comma-separated list of accepted domains

--exclude-domains=LIST comma-separated list of rejected domains

--follow-ftp follow FTP links from HTML documents

--follow-tags=LIST comma-separated list of followed HTML tags

--ignore-tags=LIST comma-separated list of ignored HTML tags

-H, --span-hosts go to foreign hosts when recursive

-L, --relative follow relative links only

-I, --include-directories=LIST list of allowed directories

--trust-server-names use the name specified by the redirection

URL's last component

-X, --exclude-directories=LIST list of excluded directories

-np, --no-parent don't ascend to the parent directory

Email bug reports, questions, discussions to <bug-wget@gnu.org>

and/or open issues at https://savannah.gnu.org/bugs/?func=additem&group=wget.

As you can see, it includes a great many useful features.

Among them, the -O option described above, -c (--continue, which resumes a partially downloaded file) and -q (--quiet, which suppresses output) are especially handy and worth memorizing.
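Since -q hides all output, a script has to rely on wget's exit status to tell whether a download succeeded. The Python sketch below combines -c and -q and maps the exit status to a message; the code table follows the "Exit Status" section of the GNU Wget manual, while the function names are hypothetical. Keep in mind that -c only works with FTP servers and with HTTP servers that support the Range header.

```python
import subprocess
from typing import List

# Exit codes as documented in the "Exit Status" section of the GNU Wget manual.
WGET_EXIT_CODES = {
    0: "no problems occurred",
    1: "generic error",
    2: "parse error (command-line options, .wgetrc or .netrc)",
    3: "file I/O error",
    4: "network failure",
    5: "SSL verification failure",
    6: "username/password authentication failure",
    7: "protocol error",
    8: "server issued an error response",
}


def resume_quietly_args(url: str) -> List[str]:
    """-c resumes a partially downloaded file; -q suppresses wget's output."""
    return ["wget", "-c", "-q", url]


def describe_exit(code: int) -> str:
    """Translate a wget exit status into a readable message."""
    return WGET_EXIT_CODES.get(code, f"unknown exit status {code}")


def download(url: str) -> str:
    """Run wget quietly (network access required) and describe the outcome."""
    result = subprocess.run(resume_quietly_args(url))
    return describe_exit(result.returncode)
```

Running `download(url)` a second time after an interrupted transfer lets -c pick up where the partial file left off instead of starting over.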
